In January 2017, Denmark’s Foreign Minister Anders Samuelsen declared that Silicon Valley’s tech giants had become ‘a new kind of nation.’ Within months, Denmark appointed the world’s first Tech Ambassador to Silicon Valley, formally acknowledging that Big Tech companies exercised influence requiring diplomatic engagement. At the time, the move was celebrated as innovation. In retrospect, it looks more like capitulation.

By treating Big Tech as a diplomatic actor, Denmark implicitly accepted a transformation already underway: international politics now operates through a hybrid system of authority. States retain formal sovereignty, citizens have gained unprecedented communicative power, and private corporations exercise invisible gatekeeping control over the infrastructures through which diplomacy increasingly occurs. Unlike states, however, platforms govern these digital territories without democratic mandate, legal reciprocity or meaningful accountability.

The phenomenon of corporate actors exercising quasi-sovereign power over political communication is not new. The East India Company governed territories, raised armies, and conducted its own diplomacy. Nineteenth-century telegraph monopolies controlled the speed and reach of international information flows, shaping what states could communicate and when. Twentieth-century media empires determined which conflicts commanded public attention and which disappeared from view. What distinguishes the present moment is not corporate influence over diplomacy, but its scale, automation, and totality.

This is the ghost in the machine of contemporary international politics: not artificial intelligence achieving autonomy, but corporate power quietly exercising sovereign-like functions while remaining obscured by technical neutrality. The distinction matters less than it initially appears. The algorithms shaping diplomatic visibility are AI systems: machine learning models trained on engagement data, optimised for attention, and embedded with the biases of their training sets. The ghost and the machine are not separate phenomena. They are the same thing.

Reconstructing Diplomatic Practice

Traditional diplomatic theory assumes sovereignty exercised through institutions constrained by law, reciprocity and protocol. These pillars have not disappeared. They have been reassembled within digital environments governed not by international law, but by corporate policy and algorithmic design. What has changed is not diplomacy’s function, but who controls the conditions under which it operates.

The transformation of diplomatic signalling illustrates this shift. In late 2017, Donald Trump used X (then called Twitter) to simultaneously taunt Kim Jong Un as ‘Little Rocket Man’ and publicly undercut his own Secretary of State. The platform, not the State Department, became the primary arena of US nuclear diplomacy. Twitter’s algorithm, an AI recommendation system designed to maximise user engagement, determined which audiences saw these messages, in what sequence, and alongside which interpretations. Emotionally charged content was amplified; measured diplomatic language struggled for visibility. The algorithm did not merely transmit diplomacy. It curated it, reshaping crisis dynamics according to commercial logic rather than strategic intent.

Contemporary diplomacy now requires hybrid capacity: the ability to operate simultaneously within state-controlled institutions and platform-mediated spaces. South Korean president Moon Jae-in’s shuttle diplomacy succeeded precisely because it translated Trump’s digital signals into institutional frameworks.

States are increasingly acting as interpreters of platform politics rather than sole authors of diplomatic meaning.

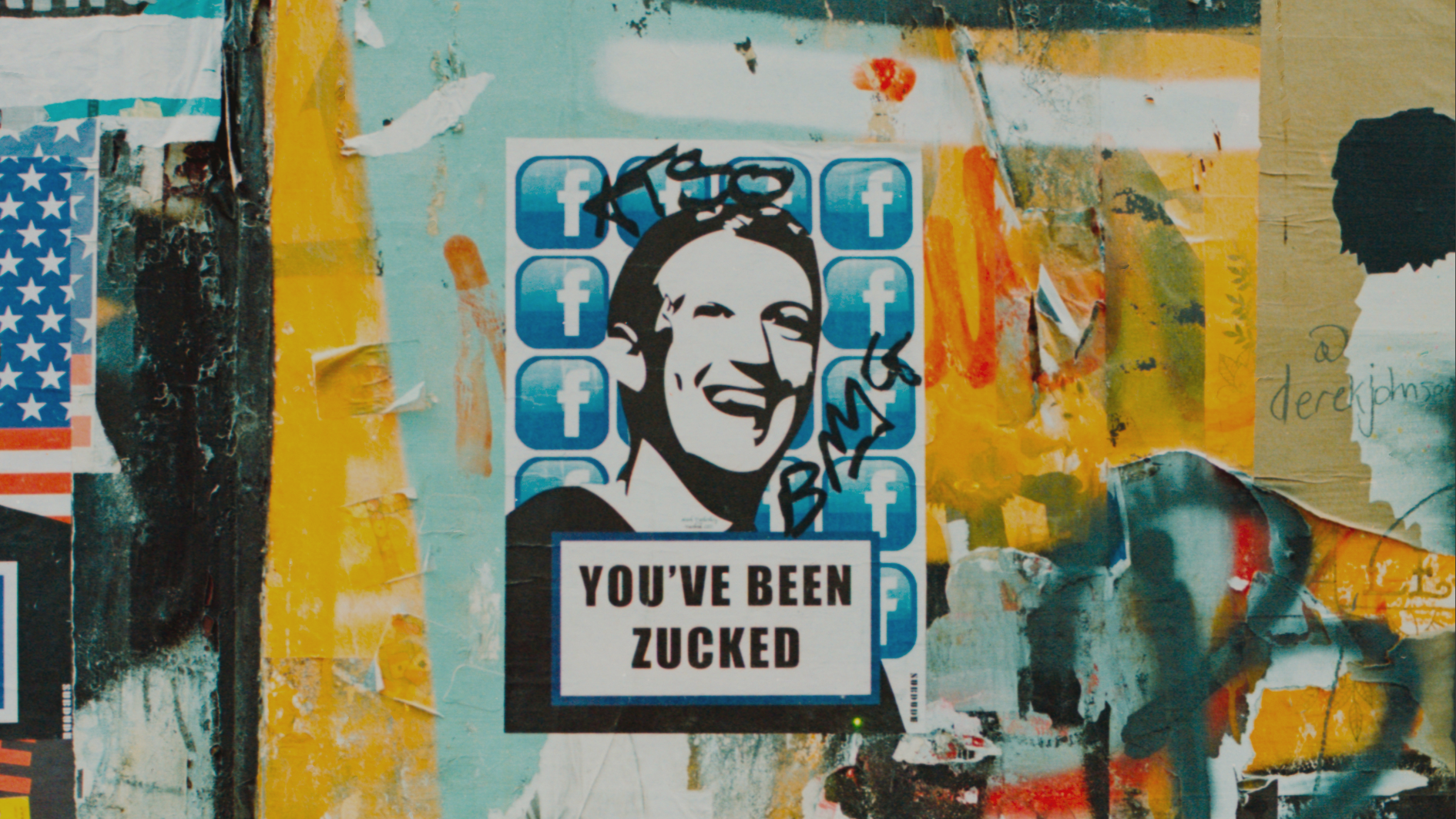

The implications become starker when corporate governance intervenes directly. When Twitter (now X) permanently suspended Trump’s account in January 2021, a private corporation curtailed the primary diplomatic communication channel of a sitting head of state. This was not state-to-state coercion constrained by international law, but corporate authority exercised without reciprocal obligation. The decision was arguably defensible. Its significance lies elsewhere: a private platform exercised sovereign-like power over diplomatic communication while remaining accountable only to its own governance structures.

Corporate Territories Without Law

Digital platforms create new political spaces that function as territories in all but name. They are sites where representation, negotiation and influence occur across borders, yet they remain governed by corporate terms of service rather than international law. This authority operates not through direct intervention, but through what Susan Strange termed ‘structural power’: the capacity to shape the conditions under which others make decisions, rather than making decisions on their behalf. Platforms do not dictate what citizen diplomats say. They determine which messages reach millions and which disappear, controlling the environment of diplomatic communication without appearing to govern it at all.

The Palestinian case reveals both the possibilities and vulnerabilities of diplomacy conducted through corporate territory. When Israel banned foreign journalists from Gaza in October 2023, traditional information channels collapsed. Into this vacuum stepped ordinary citizens armed with smartphones and social media accounts. Through his documentation of Gaza, 24-year-old photographer Motaz Azaiza amassed over 16 million followers, eclipsing the reach of most heads of state. This visibility translated into political access. Azaiza met with multiple European foreign ministers to discuss humanitarian corridors not as a credentialed envoy but as a citizen whose algorithmic amplification had granted him near-diplomatic authority at a moment when formal channels were constrained. Enabled by Big Tech, this form of citizen engagement achieved unprecedented reach and tangible impact, culminating in the formal incorporation of citizen documentation into the ICJ proceedings in South Africa’s case against Israel, a first in contemporary international law.

These creators performed the canonical functions of diplomacy: communicating across borders, representing collective interests, and attempting to influence policy outcomes. In doing so, they moved beyond activism into the functional terrain traditionally occupied by diplomats. But these citizen diplomats did so entirely within infrastructures they did not control, whose decision-making is now substantially automated. Azaiza’s account was suspended by Meta for allegedly violating content policies after he posted images of injured children. Human Rights Watch’s 2023 investigation documented widespread suppression of Palestinian content through takedowns, shadow bans and discriminatory enforcement. These decisions were rendered not by human moderators exercising political judgement, but largely by AI classifiers trained on datasets that systematically underrepresent conflict contexts outside Western norms. The consequences are profoundly political. Yet these systems remain insulated from scrutiny not because no one was watching, but because they operate faster than any human review process can meaningfully follow. In fact, when a Meta engineer attempted to correct the wrongful automated flagging of Azaiza’s account, he was dismissed and his lawsuit channelled into closed arbitration.

Agency Redistribution and Asymmetric Dependency

Digital platforms redistribute diplomatic agency while entrenching new dependencies. When Plestia Alaqad, another Palestinian creator with 3.9 million followers, mobilised her Instagram audience to pressure UK MPs over Gazan students’ visa applications, her campaign achieved tangible policy outcomes. Platform visibility generated media coverage, which sparked parliamentary debate and produced political space for visa approvals. This resembled negotiation, but without confidentiality, reciprocity or institutional guarantees. Had Meta’s AI moderation systems classified the campaign as violating community standards, it would have been extinguished before gaining traction. Citizen diplomats wield influence, but only within the narrow tolerance of corporate governance.

This asymmetry defines platform sovereignty. Creators depend on platforms; platforms do not depend on any single creator. States face similar dependencies, but with greater leverage. The EU’s General Data Protection Regulation and Digital Services Act demonstrate how territorial authority can be deployed against transnational platforms. However, even the most powerful regulators struggle with enforcement. Corporations can absorb compliance costs and sustain legal challenges on a scale that smaller states cannot match, while algorithmic opacity limits meaningful oversight. The result is hybrid sovereignty with asymmetric accountability: states remain bound by democratic processes and international law; citizens enjoy no protection when excluded; corporations exercise governance without a democratic mandate while appearing technically neutral.

The Accountability Chasm

Previous technological innovations accelerated diplomacy without redistributing authority. The telegraph, telephone and television compressed time and space but left sovereignty intact. Digital platforms do something fundamentally different. They create governance spaces that both empower marginalised actors and concentrate unprecedented power in corporate hands. AI intensifies this paradox. Content moderation at Meta’s scale, with billions of posts assessed daily, requires automation. The political decisions embedded in that automation cannot be meaningfully reviewed after the fact. Transparency reports and algorithmic audits reveal patterns; they cannot reconstruct the thousands of individual judgements that determined which conflicts commanded attention during an active war.

The EU Digital Services Act, adopted in 2022 and fully applicable since February 2024, represents the most comprehensive attempt to hold platforms accountable for their governance of public discourse. It requires transparency in content moderation, mandates independent auditing of algorithmic systems, and imposes obligations during periods of crisis. Yet the DSA did not prevent shadow bans, did not make appeals processes meaningful, and did not alter the structural conditions under which citizen diplomacy operates. If the most rigorous accountability framework produced by the world’s largest regulatory bloc proved insufficient to constrain platform sovereignty, the accountability gap is not just a technical problem awaiting a technical solution. It is also a political choice: the result of political will eroded by economic dependency, regulatory capture and market ideology.

The uncomfortable alternative makes this chasm harder to dismiss. China has effectively resolved the tension between platform sovereignty and state authority by subordinating digital infrastructure entirely to party control. If unaccountable corporate governance is the problem, state governance of digital space offers no solution. It merely relocates the power that determines whose voices circulate. The choice is not between accountability and its absence, but between two forms of authority – corporate and state – neither of which has demonstrated the willingness to govern digital political space without suppressing dissent.

Those most excluded from traditional diplomatic infrastructure face the greatest vulnerability to corporate gatekeeping. Palestinians turn to platforms precisely because state-centric diplomacy excludes them. Yet they become dependent on corporations that supply cloud computing and AI services to the Israeli military while moderating Palestinian documentation of that violence. Google and Amazon’s Project Nimbus agreement, valued at US$1.2 billion, provides cloud infrastructure and artificial intelligence directly to Israeli military operations. The same Big Tech corporations that power military targeting systems power the content classifiers that determine which content survives online. This is not neutral mediation. It is governance by proxy, reproducing political hierarchies through technical administration.

The Unfinished Reckoning

Denmark was right to recognise tech giants as diplomatic actors. What remains unresolved is the accountability vacuum that recognition exposes. Corporate diplomacy now extends beyond lobbying and narrative framing into something more fundamental: the direct exercise of decision-making power over the infrastructure diplomacy depends on. When Elon Musk, through SpaceX’s Starlink, demonstrated the practical capacity to switch satellite internet on or off for specific regions, expanding connectivity in Ukraine and Iran according to his own judgement, he exercised a form of corporate diplomatic power that no international framework anticipated. A single individual, accountable to no electorate and bound by no treaty, exercised sovereign-like control over whether communication happened at all. The 1961 Vienna Convention on Diplomatic Relations codified rules governing diplomacy between states. No equivalent framework constrains how corporations, and the tech billionaires who control them, wield comparable power over global communication, visibility and political voice.

The machinery of twenty-first-century diplomacy now operates through infrastructures owned by private corporations whose governance logics are commercial rather than political. AI systems determine which conflicts command attention. Automated content moderation shapes whose testimony circulates. Platform policy shifts can render entire diplomatic strategies obsolete overnight. The ghost in the machine is not artificial intelligence achieving consciousness. It is the persistence of moral authority – witnessing, legitimacy, collective voice – circulating through systems never designed to govern it and captured by actors never authorised to wield it.

Until platforms are recognised as political actors exercising power commensurate with sovereignty, and until the AI systems through which they exercise that power are subjected to accountability mechanisms proportionate to their influence, the ghost will remain embedded in the machine – invisible, often unaccountable, and increasingly decisive in determining whose voices matter in international politics. The path forward runs neither through corporate self-regulation nor through state capture of digital space, but through democratic legitimacy – imperfect and slow as that path is. Just as the Vienna Convention emerged from states collectively deciding to codify rules constraining their own behaviour, platform governance requires equivalent multilateral will. Democratic institutions, courts and legislatures remain the only actors with both the legitimacy to restrict harmful technologies and the accountability to do so without simply transferring power to those willing to suppress dissent.

Maha Akbar holds a Masters in Diplomatic Studies from the University of Oxford. She is the founder of The Method Lab, a communications firm specialising in strategic storytelling for mission-driven organisations. Previously, she led communications at UN Migration. Her work sits at the intersection of technology, diplomacy, and the politics of narrative.